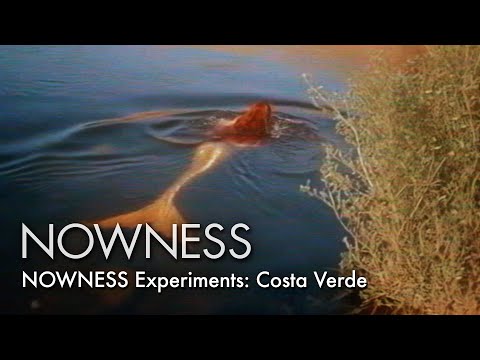

Generating Photorealistic Video with AI

Jon Warlick

Jon Warlick, a wedding videographer from North Carolina, creates what may be the first AI-generated video. Using NVIDIA's GauGAN, a tool that turns simple color-coded sketches into photorealistic landscape images, he paints a landscape in GIMP, animates a camera move through it in After Effects, exports every frame, runs each one through GauGAN individually, then reassembles the transformed frames into video. Because GauGAN treats each frame as a separate image, details shift from one frame to the next, and the result is flickering, dreamlike, and unmistakably alien. Three years before CogVideo, one person with patience and ingenuity found a way to make AI move.

Made with NVIDIA GauGAN